I’ve started encountering a problem that I could use some assistance troubleshooting. I’ve got a Proxmox system that primarily hosts my Opnsense router. I’ve had this specific setup for about a year.

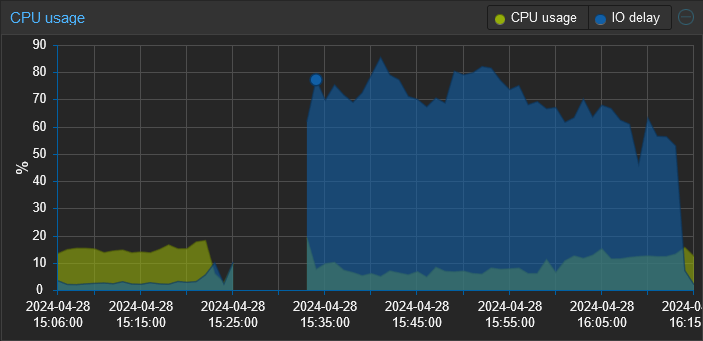

Recently, I’ve been experiencing sluggishness and noticed that the IO wait is through the roof. Rebooting the Opnsense VM, which normally takes only a few minutes, is now taking upwards of 15-20 minutes. The entire time, my IO wait sits between 50-80%.
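For anyone who wants to put a number on IO wait outside the Proxmox graphs, here is a minimal Python sketch that derives it from two samples of the aggregate `cpu` line in `/proc/stat` on the host. The function names (`cpu_times`, `iowait_percent`, `sample_iowait`) and the 5 second interval are just illustrative choices, not something from the original setup.

```python
import time


def cpu_times(stat_line):
    """Parse the aggregate 'cpu' line from /proc/stat into a list of ints."""
    fields = stat_line.split()
    if fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line")
    return [int(x) for x in fields[1:]]


def iowait_percent(before, after):
    """Percentage of CPU time spent in iowait between two 'cpu' lines."""
    a, b = cpu_times(before), cpu_times(after)
    # Use the first 8 counters: user, nice, system, idle, iowait, irq,
    # softirq, steal. Guest time is already counted inside user/nice.
    deltas = [y - x for x, y in zip(a[:8], b[:8])]
    total = sum(deltas)
    if total == 0:
        return 0.0
    return 100.0 * deltas[4] / total  # index 4 is iowait


def read_cpu_line(path="/proc/stat"):
    """Return the first line of /proc/stat (the aggregate 'cpu' line)."""
    with open(path) as f:
        return f.readline()


def sample_iowait(interval=5.0):
    """Take two samples `interval` seconds apart and return iowait in %."""
    before = read_cpu_line()
    time.sleep(interval)
    return iowait_percent(before, read_cpu_line())
```

Calling `sample_iowait()` on the host during a VM reboot should give a figure comparable to what the summary graph shows, though the graph may average over a different window.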

The system has one disk in it, formatted with ZFS. I’ve checked dmesg and the syslog for indications of disk errors (this feels like a failing disk) and found none. I also checked the SMART statistics and they all “PASSED”.

Any pointers would be appreciated.

Edit: I believe I’ve found the root cause of the change in performance, and it was a bit of shooting myself in the foot. I’ve been experimenting with different tools for log collection, and the most recent one is a SIEM tool called Wazuh. I didn’t realize that upon reboot it runs an integrity check that generates a ton of disk I/O. So when I rebooted this Proxmox server, that integrity check was running on Proxmox, my pihole, and (I think) Opnsense concurrently, all against a single consumer-grade HDD.

Thanks to everyone who responded. I really appreciate all the performance tuning guidance. I’ve also made the following changes:

- Added a 2nd drive (I have several of these lying around, don’t ask), converting the zfs pool into a mirror. This gives me redundancy and should also improve read performance.

- Configured a 2nd storage target on the same zpool with compression enabled and a 64k block size in proxmox. I then migrated the 2 VMs to that storage.

- Since I’m collecting logs in Wazuh I set Opnsense to use ram disks for /tmp and /var/log.

Rebooted Opnsense and it was back up in 1:42 min.

I’m specifically referencing this little bit of info for optimizing zfs for various situations.

VMs, for example, should exist in their own dataset with a tuned record size of 64k.

Media should exist in its own dataset with a tuned record size of 1mb.

lz4 is quick and should always be enabled. It will also work efficiently with larger record sizes.

Anyway, all the little things add up with zfs. When you have an underlying zfs you can get away with simpler and more performant filesystems on zvols or qcow2. XFS, UFS, EXT4 all work well with 64k record sizes from the underlying zfs dataset/zvol.

Btw, changing the option on a dataset doesn’t affect existing data; only newly written data uses the new record size. You have to move the data out and then back in for it to be rewritten with the new record size.

That cheat sheet is getting bookmarked. Thanks.

You are very welcome :)

Should the vm storage block size also be set to 1MB or just the ZFS record size?

Most filesystems mentioned in the guide that live inside qcow2, zvols, or even raw images on a zfs dataset would benefit from a zfs recordsize of 64k. By default the recordsize is 128k.

I would never utilize 1mb for any dataset that had vm disks inside it.

I would create a new dataset for media off the pool and set a recordsize of 1mb. You can only really get away with this if you have media files directly inside this dataset. So pics, music, videos.

The cool thing is you can set these options on an individual dataset basis. So one dataset can have one recordsize and another dataset can have another.
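To make the per-dataset idea concrete, here is a rough Python sketch that only builds (and prints) the `zfs create` commands for the layout described in this thread; it does not run anything. The pool name `tank` and the dataset names are placeholders I made up, so swap in your own. `recordsize` and `compression` are standard ZFS dataset properties set with `-o`.

```python
def dataset_create_cmd(dataset, recordsize, compression="lz4"):
    """Build (but do not run) a `zfs create` command for a tuned dataset."""
    return [
        "zfs", "create",
        "-o", f"recordsize={recordsize}",
        "-o", f"compression={compression}",
        dataset,
    ]


# Hypothetical layout: one dataset for VM disks, one for media files.
LAYOUT = {
    "tank/vms": "64K",    # VM disk images (qcow2/raw)
    "tank/media": "1M",   # pics, music, videos stored directly in the dataset
}

commands = [dataset_create_cmd(name, rs) for name, rs in LAYOUT.items()]

for cmd in commands:
    print(" ".join(cmd))
```

Once the datasets exist, something like `zfs get recordsize,compression tank/vms` should confirm what each one is using. Keep in mind that data already written keeps its old record size until it is rewritten.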